Scientists Create Speech Neuroprosthesis, Helping Paralyzed People Communicate

Scientists have, for the first time, successfully developed a speech neuroprosthesis that helps a man with severe paralysis communicate. Researchers at UC San Francisco created a technology that allows him to communicate in complete sentences. The system translates the signals sent from his brain to his vocal tract into words that appear on a screen.

More than a decade of research by UCSF neurosurgeon Edward Chang, MD, led to this groundbreaking result. His work aimed to create a technology that lets people with paralysis communicate even when they cannot speak on their own. The study appeared on July 15, 2021, in the New England Journal of Medicine.

Dr. Chang, the Joan and Sanford Weill Chair of Neurological Surgery at UCSF, Jeanne Robertson Distinguished Professor, and senior author of the study, had this to say:

“To our knowledge, this is the first successful demonstration of direct whole-word decoding of the brain activity of someone who is paralyzed and unable to speak. It shows great promise in restoring communication by harnessing the brain’s natural speech machinery.”

Strokes, neurodegenerative diseases, and accidents cause anarthria (the loss of the ability to speak) in thousands of people a year. The researchers believe that their technology could help these people communicate more naturally and efficiently in the future.

How seizure patients helped develop the communication neuroprosthesis

Previous research on communication neuroprostheses aimed to help patients communicate through spelling alone. These approaches involved typing out letters one at a time, relying on the brain signals that move the arm or hand. Chang’s study, by contrast, focuses on translating the signals that control the muscles of the vocal tract to pronounce words. Chang says that this method allows for more natural and fluid communication.

Dr. Chang noted that spelling-based approaches using typing, writing, and cursor control are considerably slower and more laborious.

“With speech, we normally communicate information at a very high rate, up to 150 or 200 words per minute. Going straight to words, as we are doing here, has great advantages because it is closer to how we normally speak.”

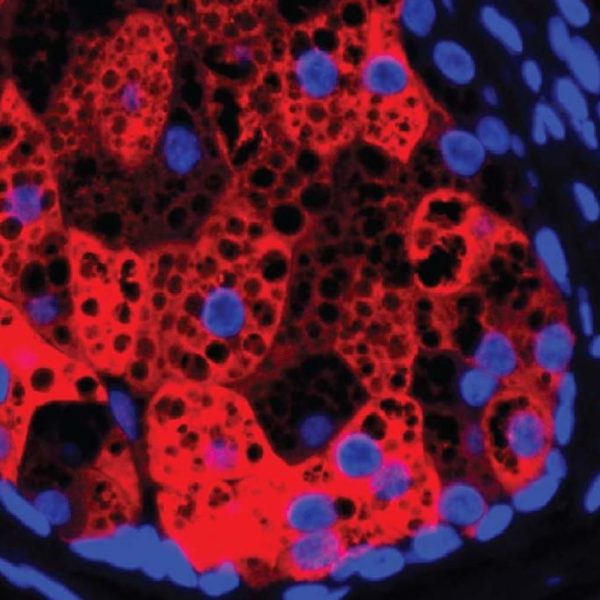

For the past ten years, Chang’s research involved seizure patients with normal speech at the UCSF Epilepsy Center. They were undergoing neurosurgery so that doctors could pinpoint the origin of their seizures. The patients had electrode arrays placed on the surface of their brains to track electrical activity. They volunteered to have these recordings analyzed for speech-related activity.

Next, Chang and his colleagues at UCSF’s Weill Institute for Neurosciences mapped the brain patterns associated with the movements of the vocal tract responsible for speech. The team then worked on translating that brain activity into recognition of whole words. This responsibility fell to David Moses, PhD, a postdoctoral engineer in Chang’s lab and one of the lead authors of the new study. He created new techniques to decode the patterns in real time, along with statistical language models to increase accuracy.

This work was fruitful, so Chang wanted to replicate it in people with paralysis. However, the team realized that this time they faced more difficult challenges. The technology had worked well in people who could speak, but they weren’t sure how it would work in a person whose vocal tract was paralyzed.

Dr. Moses said this:

“Our models needed to learn the mapping between complex patterns of brain activity and intended speech. That poses a great challenge when the participant cannot speak.”

On top of that, the researchers did not know whether the brain signals that control the vocal tract would still be intact. Would they still work in people who haven’t been able to use their vocal muscles for years?

“The best way to find out if this might work was to try it,” Moses said.

The study showing how a speech neuroprosthesis helps paralyzed people communicate

For the study, Chang collaborated with his colleague Karunesh Ganguly, MD, PhD, associate professor of neurology. Together, they launched “BRAVO” (Brain-Computer Interface Restoration of Arm and Voice). The first volunteer in the study, known as BRAVO1, is a man in his 30s. More than 15 years ago, he suffered a severe brain stem stroke that badly damaged the connection between his brain and his vocal tract and limbs.

Since his stroke, he has had minimal mobility of his head, neck, and extremities. He communicates using just a pointer that presses letters on a screen.

For this study, the participant worked together with the researchers to formulate a 50-word vocabulary that Chang’s team could recognize from brain activity using advanced computer algorithms. The vocabulary includes simple words like “good”, “water” and “family”. These words can be combined into hundreds of sentences that help BRAVO1 express his thoughts.

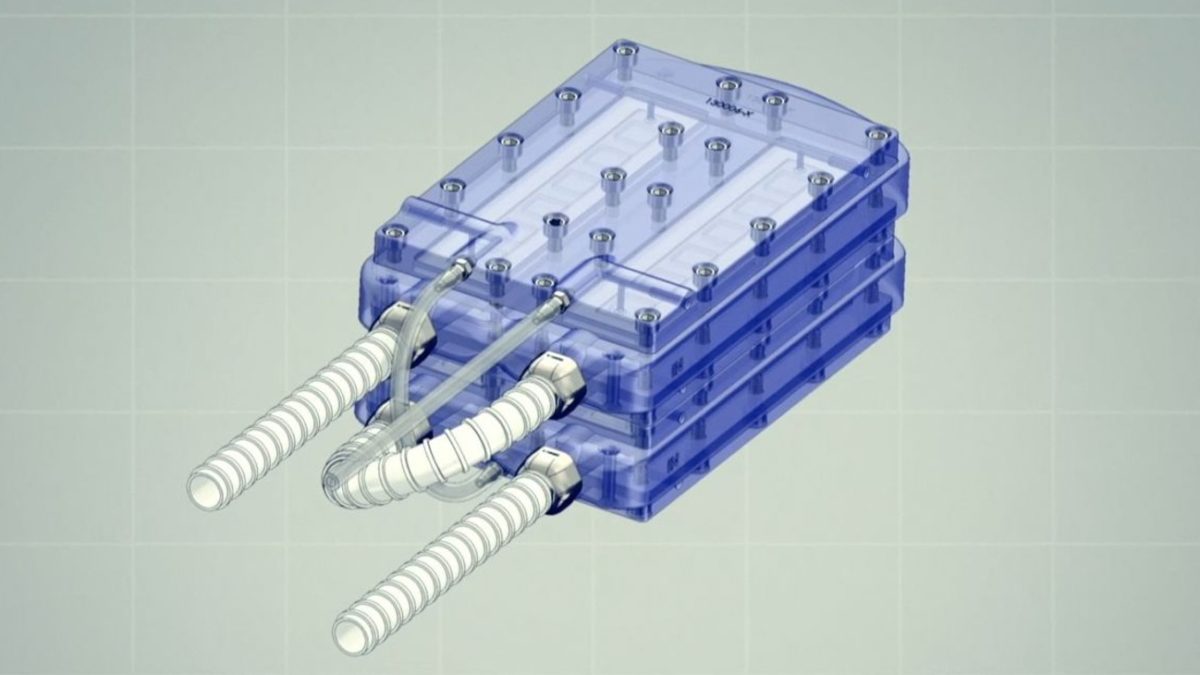

After that, Chang surgically implanted a high-density electrode array over BRAVO1’s speech motor cortex. After BRAVO1 made a full recovery, the team recorded 22 hours of brain activity over the course of 48 sessions. The recording sessions were spread over several months. In each session, BRAVO1 made several attempts to say each vocabulary word. At the same time, the electrodes recorded signals from his speech motor cortex.

How the Speech Neuroprosthesis Converts Brain Signals Into Words

Other lead authors of the study used custom neural network models to translate speech intent into text. These networks, which are forms of artificial intelligence, distinguished subtle patterns in brain activity to detect speech attempts. The system then identified which words BRAVO1 was trying to say.

To test the models, the team gave BRAVO1 short sentences made up of words from the 50-word vocabulary. Then they asked him to try saying them a few times. When he tried to speak, the words decoded from his brain activity appeared on a screen.

The team then started asking him questions like “How are you doing today?” or “Do you want some water?” BRAVO1’s responses then appeared on the screen: “I am very good” and “No, I am not thirsty.”

The speech neuroprosthesis decoded words from brain activity at a rate of up to 18 words per minute. Impressively, it reached up to 93 percent accuracy (median 75 percent). The language model that Moses created also played a role in its success. It gave the system an auto-correct function, similar to the one in modern smartphones and speech recognition software, which increased accuracy.
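To give a rough sense of how a statistical language model can correct a word decoder, here is a minimal Python sketch. It is not the team's code: the five-word vocabulary, the probabilities, and the function names (`lm_prob`, `greedy_decode`, `viterbi_decode`) are all made up for illustration. It assumes a classifier that outputs a probability for every vocabulary word at each speech attempt, plus a simple bigram model of which word tends to follow which, and it uses Viterbi search to pick the most likely sentence overall.

```python
import math

# Hypothetical toy vocabulary; the real system used 50 words.
VOCAB = ["i", "am", "not", "thirsty", "good"]

# Assumed bigram language model: P(word | previous word).
# "<s>" marks the start of a sentence. All numbers are invented.
BIGRAM = {
    ("<s>", "i"): 0.8,
    ("i", "am"): 0.9,
    ("am", "not"): 0.4,
    ("am", "good"): 0.3,
    ("am", "thirsty"): 0.2,
    ("not", "thirsty"): 0.5,
    ("not", "good"): 0.3,
}
FLOOR = 0.01  # small probability for word pairs not listed above


def lm_prob(prev, word):
    """Probability of `word` following `prev` under the toy bigram model."""
    return BIGRAM.get((prev, word), FLOOR)


def greedy_decode(classifier_probs):
    """Pick the classifier's top word at each step, ignoring context."""
    return [max(probs, key=probs.get) for probs in classifier_probs]


def viterbi_decode(classifier_probs, lm_weight=1.0):
    """Find the word sequence that maximizes classifier * language model score.

    classifier_probs: one dict (word -> probability) per attempted word.
    """
    # best[w] = (log score, path) of the best sequence ending in word w
    best = {"<s>": (0.0, [])}
    for probs in classifier_probs:
        new_best = {}
        for word in VOCAB:
            emit = math.log(probs.get(word, 1e-9))
            candidates = (
                (score + lm_weight * math.log(lm_prob(prev, word)) + emit,
                 path + [word])
                for prev, (score, path) in best.items()
            )
            new_best[word] = max(candidates, key=lambda c: c[0])
        best = new_best
    return max(best.values(), key=lambda c: c[0])[1]


# Invented classifier output for the attempt "I am not thirsty".
# On the third word the classifier slightly prefers "good" over "not".
attempt = [
    {"i": 0.7, "am": 0.1, "not": 0.1, "thirsty": 0.05, "good": 0.05},
    {"i": 0.1, "am": 0.6, "not": 0.1, "thirsty": 0.1, "good": 0.1},
    {"i": 0.05, "am": 0.05, "not": 0.35, "thirsty": 0.1, "good": 0.45},
    {"i": 0.05, "am": 0.05, "not": 0.1, "thirsty": 0.6, "good": 0.2},
]

print(greedy_decode(attempt))   # ['i', 'am', 'good', 'thirsty']
print(viterbi_decode(attempt))  # ['i', 'am', 'not', 'thirsty']
```

In this toy case the classifier alone misreads the third word, but the language model knows that “good thirsty” is an unlikely pairing, so the combined search settles on the sentence that makes sense. That is the same basic idea as auto-correct on a phone.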

“We were excited to see the accurate decoding of a variety of meaningful sentences,” Moses said. “We have shown that it is actually possible to facilitate communication in this way and that it has the potential to be used in conversational settings.”

Final thoughts on the speech neuroprosthesis that improves communication in paralyzed people

In the future, Chang and Moses will expand the trial to include more volunteers with severe paralysis. For now, the team is working to enlarge the vocabulary and improve the speed of decoding.

While the study only included one participant and a 50-word vocabulary, both scientists rated it as a great success. Finally, Moses said: “This is an important technological milestone for a person who cannot communicate naturally and demonstrates the potential of this approach to give voice to people with severe paralysis and loss of speech.”